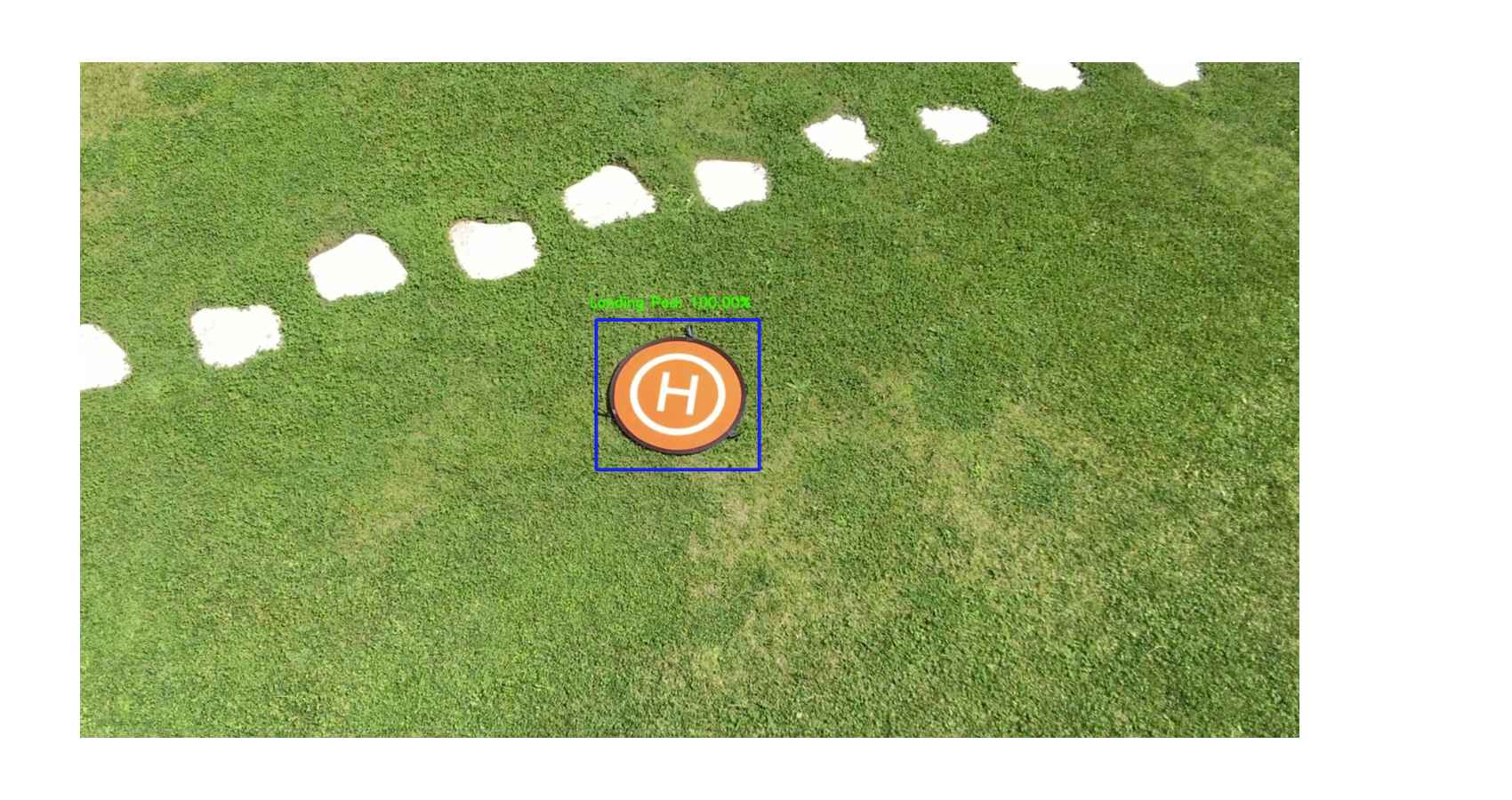

In recent years, artificial intelligence (AI), machine learning (ML), and deep learning (DL) have become widely used in both academia and industry. These technologies often outperform traditional solutions and improve quality of service. For example, computer vision applications rely heavily on DL algorithms because of their strong results in image classification and recognition.

However, such algorithms have high computational demands, which restrict their use to powerful devices that are not bound by tight memory and energy constraints. This makes deep learning difficult to use in Internet of Things (IoT) systems, which connect simple devices with limited computational and energy resources.

Given the rapid progress of embedded devices, in line with Moore’s law, it is now feasible to integrate ML functionality and ubiquitous intelligence into resource-constrained end devices. Consequently, many researchers are working to close the gap between the demands of ML algorithms and the capabilities of IoT end nodes, making those nodes intelligent and realizing the TinyML paradigm.

The value of TinyML: This technology enables intelligence even in small, low-cost, and low-power devices, offering the typical advantages of edge computing.

On the other hand, implementing TinyML algorithms introduces several challenges, including:

The main advantages are:

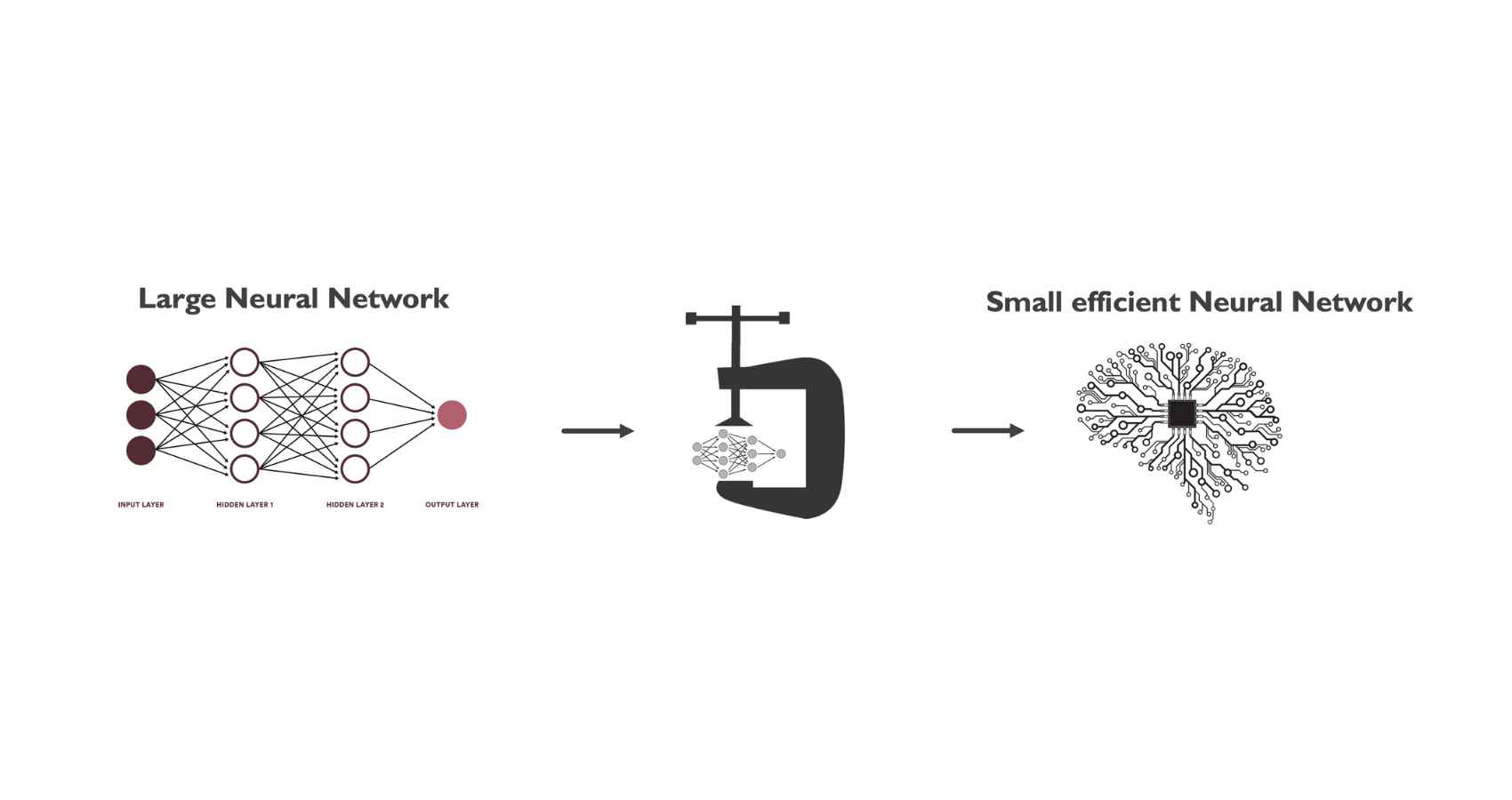

To fully exploit all these advantages, it is essential to develop hardware-aware ML models, that is, models designed around the constraints of the hardware they run on. This introduces the main challenge of TinyML: implementing complex ML models, such as deep neural networks (DNNs), on end devices with limited computational capabilities.

Fortunately, model compression and optimization techniques make it possible to deploy complex models on resource-constrained devices. However, these techniques degrade model performance, so models must be optimized while maintaining an acceptable quality of service. For this reason, researchers are actively looking for ways to compress models drastically without significantly compromising performance.
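The trade-off between compression and performance can be illustrated with a small sketch. The text above does not name a specific technique, so the example below assumes one common choice, 8-bit affine quantization of weights, and uses randomly generated values as stand-ins for a trained model's weights. The function names `quantize_int8` and `dequantize` are hypothetical helpers written for this illustration, not part of any library.

```python
import random

def quantize_int8(weights):
    """Map float weights to 8-bit integers with an affine (scale + offset) scheme."""
    w_min, w_max = min(weights), max(weights)
    # One quantization step; fall back to 1.0 if all weights are identical.
    scale = (w_max - w_min) / 255 or 1.0
    # Offset chosen so that w_min maps to -128.
    zero_point = round(-w_min / scale) - 128
    # Round to the nearest step and clamp to the signed 8-bit range.
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point

def dequantize(q, scale, zero_point):
    """Recover approximate float weights from their 8-bit representation."""
    return [(v - zero_point) * scale for v in q]

# Assumption: random values stand in for the weights of a trained model.
random.seed(0)
weights = [random.gauss(0.0, 0.5) for _ in range(10_000)]

q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

fp32_bytes = 4 * len(weights)   # 32-bit floats: 4 bytes per weight
int8_bytes = 1 * len(q)         # 8-bit integers: 1 byte per weight
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print(f"float32 size: {fp32_bytes} bytes, int8 size: {int8_bytes} bytes")
print(f"quantization step: {scale:.6f}, max reconstruction error: {max_err:.6f}")
```

In this sketch the storage cost drops by a factor of four, while each weight is perturbed by a rounding error on the order of half a quantization step. Whether that perturbation is acceptable depends on how it affects the model's quality of service, which is the trade-off described above.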